Why Causal AI Models Outrun Bayesian Networks

Introduction

How are Causal AI models different from Bayesian networks?

The two types of models have some superficial similarities, but they also have significant differences. Bayesian networks (BNs) simply describe patterns of correlations between variables. Causal AI models capture the underlying processes that drive those statistical relationships.

This paradigm shift makes Causal AI models more flexible, versatile, and powerful than Bayesian networks.

What are Bayesian networks?

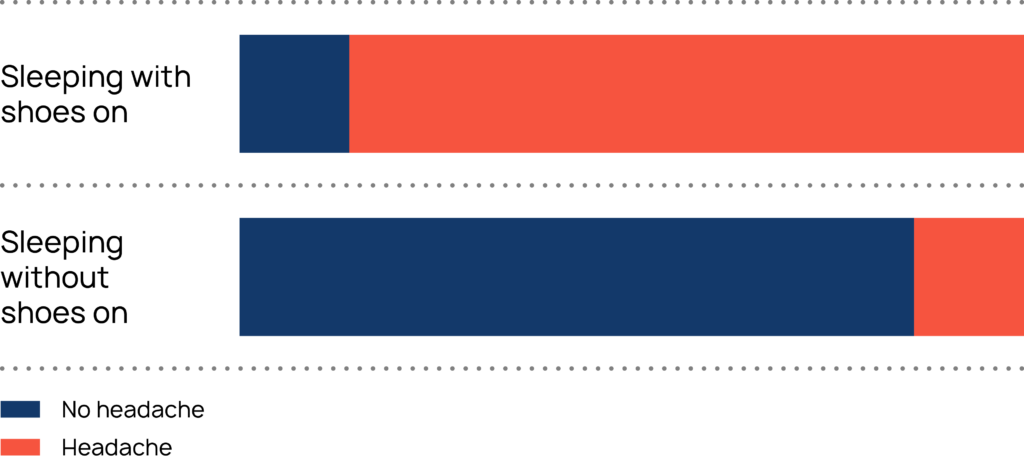

Suppose we come across data describing people’s sleeping habits. We find that there’s a strong correlation between falling asleep with shoes on and waking up with a headache (Figure 1). BNs summarize correlations (or at least our best guesses about them) in a neat graph.

Figure 1. A visualization of our dataset, in which falling asleep with shoes on strongly correlates with waking up with a headache.

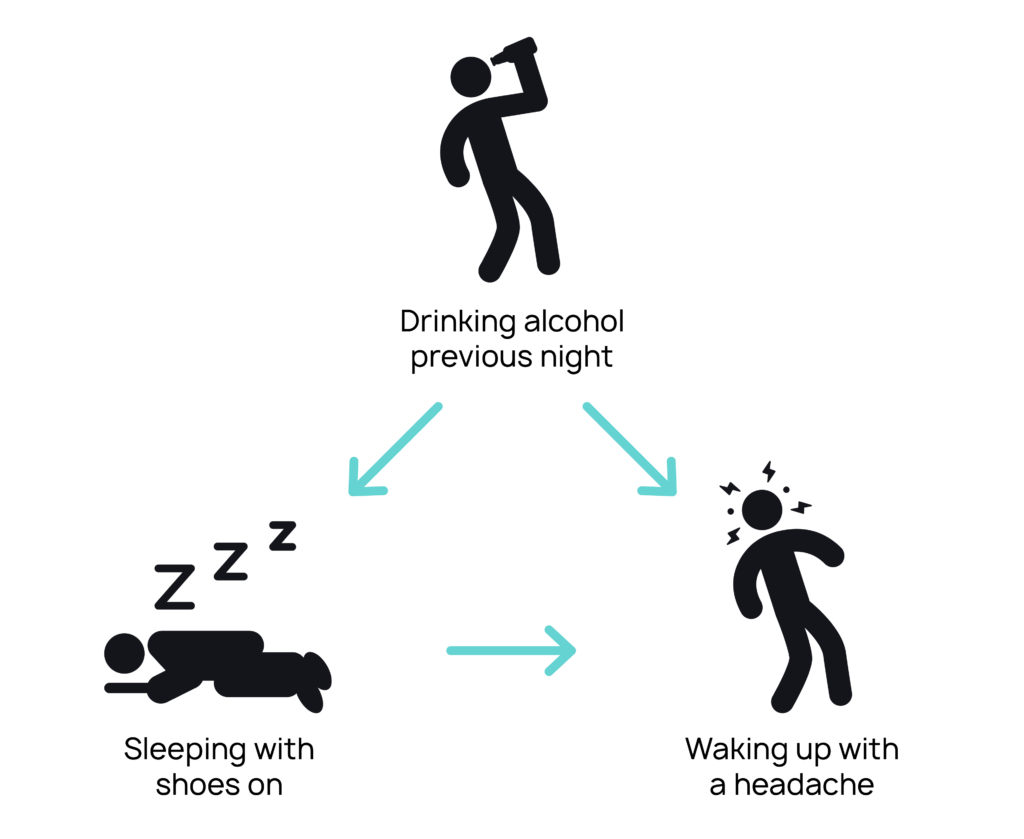

The BN in Fig. 2 tells us that both sleeping with shoes on and waking up with a headache are correlated with drinking the night before, since there is a path between the variables. It also says that, conditional on knowing that someone was drinking the night before, knowing that they slept with their shoes on tells us nothing extra about whether they have a headache the next morning (this is called “conditional independence”). We can read off the conditional independence relationship by noticing that drinking alcohol “blocks” the pathway from shoe-sleeping to headache.
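To make the conditional independence statement concrete, here is a minimal sketch of the three-variable BN. The probability values are hypothetical, chosen only for illustration, and the helper names (`joint`, `prob`) are our own rather than part of any library.

```python
from itertools import product

# Hypothetical probabilities, chosen only for illustration.
P_DRINK = 0.3
P_SHOES_GIVEN_DRINK = {True: 0.6, False: 0.05}
P_HEADACHE_GIVEN_DRINK = {True: 0.8, False: 0.1}


def bernoulli(p, value):
    """Probability that a binary variable with P(True) = p takes `value`."""
    return p if value else 1.0 - p


def joint(drink, shoes, headache):
    """BN factorization: P(drink) * P(shoes | drink) * P(headache | drink)."""
    return (bernoulli(P_DRINK, drink)
            * bernoulli(P_SHOES_GIVEN_DRINK[drink], shoes)
            * bernoulli(P_HEADACHE_GIVEN_DRINK[drink], headache))


def prob(query, given=lambda d, s, h: True):
    """P(query | given), computed by summing the joint over all eight states."""
    states = list(product([False, True], repeat=3))
    numerator = sum(joint(*x) for x in states if query(*x) and given(*x))
    denominator = sum(joint(*x) for x in states if given(*x))
    return numerator / denominator


p_h = prob(lambda d, s, h: h)
p_h_given_s = prob(lambda d, s, h: h, lambda d, s, h: s)
p_h_given_d = prob(lambda d, s, h: h, lambda d, s, h: d)
p_h_given_d_s = prob(lambda d, s, h: h, lambda d, s, h: d and s)

print(f"P(headache)                  = {p_h:.3f}")
print(f"P(headache | shoes)          = {p_h_given_s:.3f}")
print(f"P(headache | drink)          = {p_h_given_d:.3f}")
print(f"P(headache | drink and shoes) = {p_h_given_d_s:.3f}")
```

With these numbers, seeing shoes raises the probability of a headache, but once drinking is known the shoes add nothing: the last two printed values agree.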

BNs help to draw conclusions when more data becomes available (via “Bayes’ theorem”), for example about what someone was doing on the previous night.
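As a rough sketch of this kind of update, the snippet below applies Bayes’ theorem to infer whether someone was drinking, first from a headache and then from the shoes as well. All numbers and the `posterior` helper are hypothetical, used only for illustration.

```python
# Hypothetical numbers, for illustration only.
prior_drink = 0.3
p_headache_given_drink = 0.8
p_headache_given_sober = 0.1
p_shoes_given_drink = 0.6
p_shoes_given_sober = 0.05


def posterior(prior, likelihood_if_true, likelihood_if_false):
    """Bayes' theorem for a binary hypothesis and one piece of evidence."""
    evidence = prior * likelihood_if_true + (1.0 - prior) * likelihood_if_false
    return prior * likelihood_if_true / evidence


# Update 1: we learn that the person woke up with a headache.
p_drink_given_headache = posterior(
    prior_drink, p_headache_given_drink, p_headache_given_sober)

# Update 2: we also learn that they slept with their shoes on.
# Reusing the first posterior as the new prior is valid here because,
# in this BN, shoes and headache are conditionally independent given drinking.
p_drink_given_both = posterior(
    p_drink_given_headache, p_shoes_given_drink, p_shoes_given_sober)

print(f"P(drink)                    = {prior_drink:.3f}")
print(f"P(drink | headache)         = {p_drink_given_headache:.3f}")
print(f"P(drink | headache, shoes)  = {p_drink_given_both:.3f}")
```

Each new observation shifts the belief about the previous night further toward drinking.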

Causal AI models

BNs sound useful! What’s the catch? The core problem is that Bayesian networks are blind to causality. This key, missing ingredient makes BNs very limited when it comes to more sophisticated reasoning and decision-making challenges.

Causal AI is a new category of machine intelligence. Causal AI builds models that are able to capture cause-effect relationships while also retaining the benefits of BNs. We set out 5 ways in which this sets Causal AI models apart from Bayesian networks.

1. Causality vs correlations

It’s obvious to us humans that a heavy night of drinking causes folks to both fall asleep with their shoes still on and wake up with a headache the next morning. BNs can’t understand this; Causal AI models can.

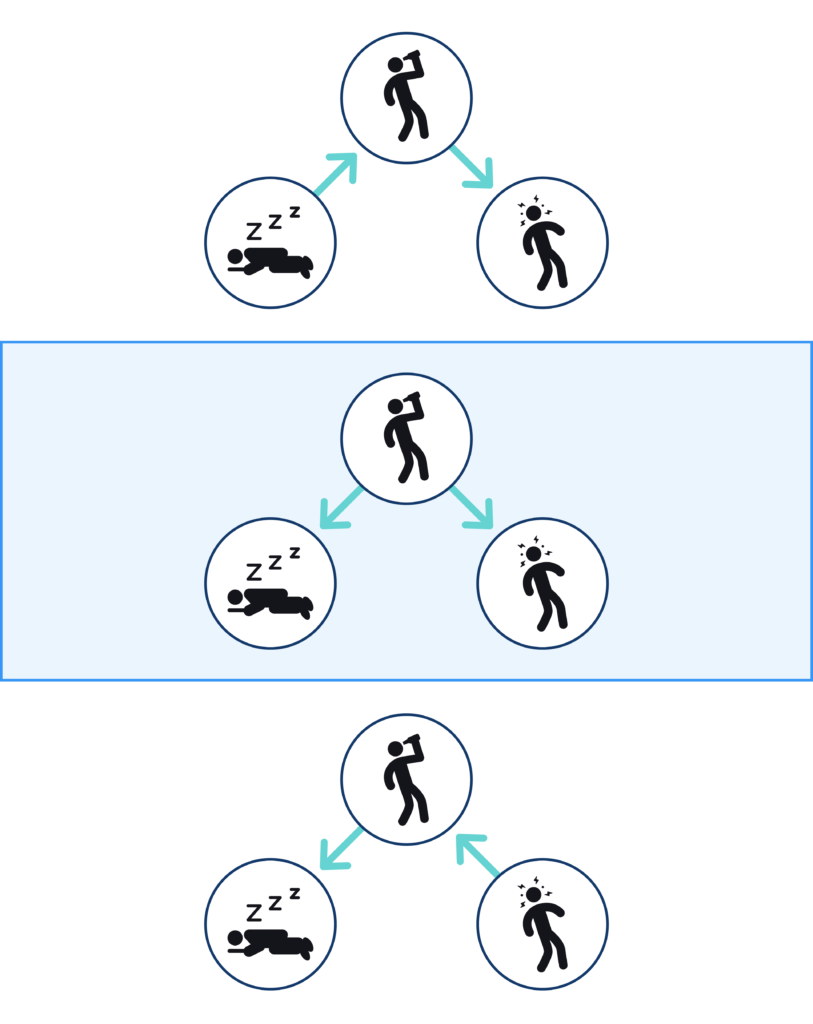

Many BNs are statistically compatible with the data, but only one BN corresponds to the genuine causal relationships in the system (Figure 3). As a result, it’s always left ambiguous whether your BN is a good causal model or not.

And the overwhelming chances are that your BN is not a good causal model. The number of possible BNs grows faster than exponentially as the number of features increases. With, say, 20 variables in your data, there’s effectively zero chance of randomly stumbling across the true causal model. This means you’re very likely using a BN that’s making bad modeling decisions.
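To get a feel for how quickly the space of candidate graphs grows, the sketch below counts the directed acyclic graphs on n labeled variables using Robinson’s recurrence. The function name `count_dags` is our own, and counting labeled DAGs is only a proxy for “the number of possible BNs”, since several graphs can encode the same statistical model.

```python
from functools import lru_cache
from math import comb


@lru_cache(maxsize=None)
def count_dags(n):
    """Number of directed acyclic graphs on n labeled nodes.

    Robinson's recurrence:
        a(0) = 1
        a(n) = sum_{k=1..n} (-1)^(k+1) * C(n, k) * 2^(k * (n - k)) * a(n - k)
    """
    if n == 0:
        return 1
    return sum(
        (-1) ** (k + 1) * comb(n, k) * 2 ** (k * (n - k)) * count_dags(n - k)
        for k in range(1, n + 1)
    )


for n in (1, 2, 3, 4, 5, 10, 20):
    print(f"{n:>2} variables: {count_dags(n):.3e} possible graphs")
```

Python’s arbitrary-precision integers keep the count exact even when it is far too large to enumerate.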

Figure 3. Lots of BNs are statistically compatible with the data, but only one of these represents the true causality in the system. It’s always left ambiguous whether your BN is a good causal model or not.
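A small sketch of this ambiguity, using hypothetical numbers: the BN in which drinking drives both shoes and headache, and a differently oriented BN in which shoes “drive” drinking, which in turn drives headache, can encode exactly the same joint distribution. The names `fork_joint` and `chain_joint` are ours, for illustration only.

```python
from itertools import product

# Hypothetical probabilities, chosen only for illustration.
P_DRINK = 0.3
P_SHOES_GIVEN_DRINK = {True: 0.6, False: 0.05}
P_HEADACHE_GIVEN_DRINK = {True: 0.8, False: 0.1}
BOOL = (False, True)


def bernoulli(p, value):
    """Probability that a binary variable with P(True) = p takes `value`."""
    return p if value else 1.0 - p


def fork_joint(drink, shoes, headache):
    """Shoes <- Drink -> Headache: P(d) * P(s | d) * P(h | d)."""
    return (bernoulli(P_DRINK, drink)
            * bernoulli(P_SHOES_GIVEN_DRINK[drink], shoes)
            * bernoulli(P_HEADACHE_GIVEN_DRINK[drink], headache))


# Re-parameterize the same data as the chain Shoes -> Drink -> Headache.
p_shoes = sum(fork_joint(d, True, h) for d, h in product(BOOL, repeat=2))
p_drink_given_shoes = {
    s: sum(fork_joint(True, s, h) for h in BOOL) / bernoulli(p_shoes, s)
    for s in BOOL
}


def chain_joint(drink, shoes, headache):
    """Shoes -> Drink -> Headache: P(s) * P(d | s) * P(h | d)."""
    return (bernoulli(p_shoes, shoes)
            * bernoulli(p_drink_given_shoes[shoes], drink)
            * bernoulli(P_HEADACHE_GIVEN_DRINK[drink], headache))


# Largest disagreement between the two models over all eight states.
max_gap = max(abs(fork_joint(*x) - chain_joint(*x))
              for x in product(BOOL, repeat=3))
print(f"largest difference between the two joints: {max_gap:.2e}")
```

Both graphs fit the data equally well, so the data alone cannot say which arrow direction is the causal one.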

AI researchers sometimes refer to the BN corresponding to the true causal structure as a “disentangled factorization”, as it is the only model that separates out all the correlations in the data into the underlying causal mechanisms that actually generate those correlations.

2. Advanced human-in-the-loop and extra quantitative sophistication

Causal AI models are not just special “causal BNs” — they also contain extra layers of information and are more compatible with other AI systems and humans.

How strong are causal relationships between variables? How might they change over time? What kind of noise or background conditions influence those relationships? How does context impact the system? Causal AI models can encode answers to all these questions.

Causal AI models embed other AI and machine learning algorithms that can help with answering these questions. There is also a lot of scope for human users to provide input on any or all of these questions. None of this can be said of BNs, where human-machine interaction is limited to selecting features and orienting arrows.

3. Physical vs subjective interpretation

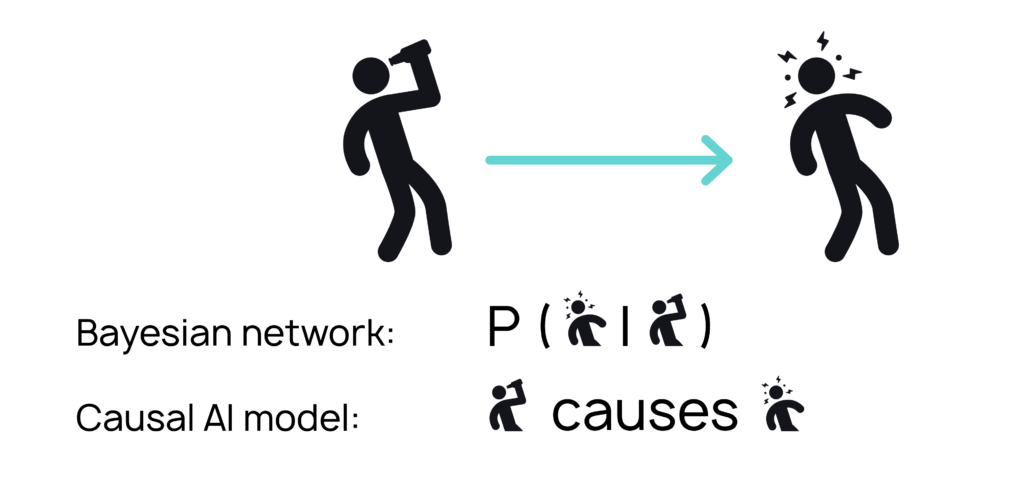

Causal AI models look similar to BNs, but the two have totally different interpretations. The arrows in BNs stand for probabilistic dependencies, whereas in Causal AI models they stand for causal relationships.

Figure 4. Bayesian networks and Causal AI models have very different interpretations.

Here’s Judea Pearl, one of the pioneers of Causal AI, describing the difference in how the two types of models are interpreted: “we finally had a mathematical object to which we could attribute familiar properties of physical mechanisms instead of those slippery epistemic probabilities… with which we had been working for so long in the study of Bayesian networks.”

4. Simulated experiments

Causal AI models tell us what will happen to the system if we “wiggle” or “intervene” on one of the variables. For instance, what happens to the incidence of morning headaches if we somehow forced people to sleep with their shoes on? The answer is obvious to us: nothing happens. But BNs can’t model this and so predict the wrong answer.

Causal AI uses a calculus that we can implement in code (“do-calculus”) that automates how interventions propagate through the causal system to impact other variables. This goes way beyond anything that can be computed by a BN.
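The gap between observing and intervening can be sketched on the shoes example. The snippet below does not implement the full do-calculus; it uses the simpler “graph surgery” idea (cutting the arrow into the intervened variable), assuming the causal graph is Shoes <- Drink -> Headache. All numbers and function names are hypothetical.

```python
# Hypothetical probabilities, chosen only for illustration.
P_DRINK = 0.3
P_SHOES_GIVEN_DRINK = {True: 0.6, False: 0.05}
P_HEADACHE_GIVEN_DRINK = {True: 0.8, False: 0.1}
BOOL = (False, True)


def bernoulli(p, value):
    """Probability that a binary variable with P(True) = p takes `value`."""
    return p if value else 1.0 - p


def p_headache_baseline():
    """P(headache) with no observation and no intervention."""
    return sum(bernoulli(P_DRINK, d) * P_HEADACHE_GIVEN_DRINK[d] for d in BOOL)


def p_headache_observing_shoes():
    """P(headache | shoes on): ordinary conditioning on an observation."""
    numerator = sum(
        bernoulli(P_DRINK, d) * P_SHOES_GIVEN_DRINK[d] * P_HEADACHE_GIVEN_DRINK[d]
        for d in BOOL)
    denominator = sum(bernoulli(P_DRINK, d) * P_SHOES_GIVEN_DRINK[d] for d in BOOL)
    return numerator / denominator


def p_headache_forcing_shoes():
    """P(headache | do(shoes on)).

    Forcing shoes on cuts the Drink -> Shoes arrow, so the factor
    P(shoes | drink) is dropped and drinking keeps its original distribution.
    """
    return sum(bernoulli(P_DRINK, d) * P_HEADACHE_GIVEN_DRINK[d] for d in BOOL)


print(f"P(headache)                 = {p_headache_baseline():.3f}")
print(f"P(headache | shoes on)      = {p_headache_observing_shoes():.3f}")
print(f"P(headache | do(shoes on))  = {p_headache_forcing_shoes():.3f}")
```

Observing shoes makes a headache more likely because it is evidence of drinking; forcing shoes on leaves the headache rate at its baseline.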

This has game-changing implications for applied AI. The “wiggling” we just described is essentially an experiment. Causal AI can simulate experiments when it’s impossible or impractical to run a randomized controlled trial or A/B test. It’s the foundation for AI-enabled decision-making. Learn more about interventions in our blog.

5. Counterfactual insights

Causal AI models are able to reason about “counterfactuals” — hypothetical scenarios about what might have happened in some alternative history. For instance, if the closure of hospitality last year had been extended, how would public health have been impacted? Surprisingly, counterfactuals are an extremely powerful tool for generating insights about the real world. Learn more about why counterfactuals are transformative for enterprise AI in our white paper.
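As a toy illustration of counterfactual reasoning, here is a sketch of the standard three-step recipe (abduction, action, prediction) on the headache example rather than the hospitality one. The structural equation, the noise variables `u_susceptible` and `u_other`, and their probabilities are all hypothetical assumptions made for this sketch.

```python
from itertools import product

# Hypothetical structural causal model, for illustration only.
# Prior probability that each unobserved background factor is present.
P_U_SUSCEPTIBLE = 0.8  # drinking gives this person a headache
P_U_OTHER = 0.1        # some unrelated cause of a headache is present


def headache(drink, u_susceptible, u_other):
    """Structural equation: headache happens via drinking or another cause."""
    return (drink and u_susceptible) or u_other


def counterfactual_headache(observed_drink, observed_headache, alt_drink):
    """P(headache had drinking been alt_drink | what was actually observed)."""
    consistent = 0.0
    still_headache = 0.0
    for u_s, u_o in product((False, True), repeat=2):
        weight = ((P_U_SUSCEPTIBLE if u_s else 1.0 - P_U_SUSCEPTIBLE)
                  * (P_U_OTHER if u_o else 1.0 - P_U_OTHER))
        # Abduction: keep only background factors that explain the observation.
        if headache(observed_drink, u_s, u_o) != observed_headache:
            continue
        consistent += weight
        # Action and prediction: change drinking, keep the same background.
        if headache(alt_drink, u_s, u_o):
            still_headache += weight
    return still_headache / consistent


# Someone drank and woke up with a headache. Had they not drunk,
# how likely is it that they would still have had the headache?
p_cf = counterfactual_headache(True, True, False)
print(f"P(headache had they not drunk | drank, headache) = {p_cf:.3f}")
```

The key design choice is that the background factors are inferred from what actually happened and then held fixed while the alternative history is replayed.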

So, Causal AI models outrun Bayesian networks in 5 crucial respects

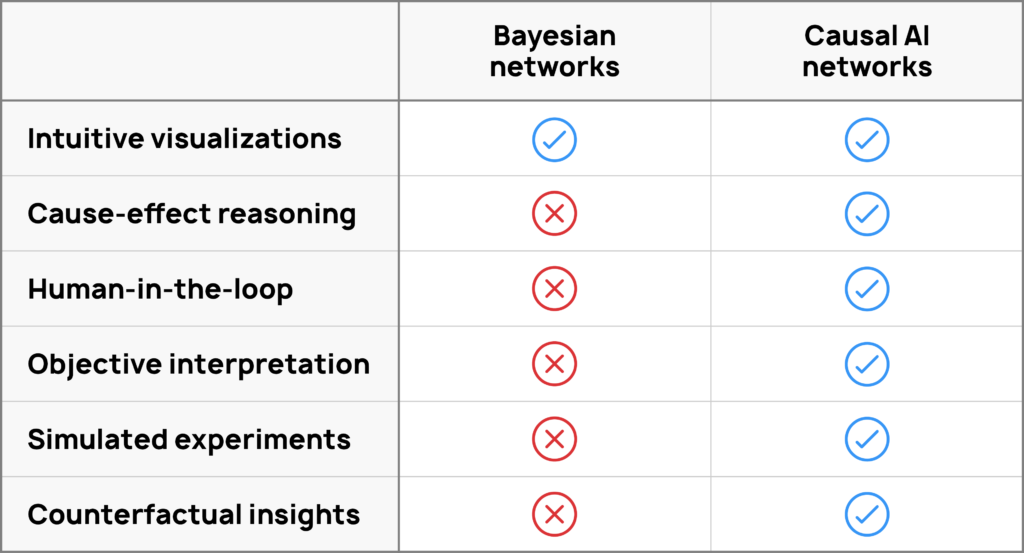

Figure 5. A direct comparison of Bayesian networks and Causal AI models, illustrating some of the clear-cut advantages of causal modeling.

You might be left with a nagging question — how do you discover these causal relationships in the first place? Check out our explainer on causal discovery algorithms to find out. And if you would like to take a deeper dive into the technical differences between Bayesian networks and causal models, read our tech report.