Digital Knowledge Workers

Automate Advanced Knowledge Work.

Across Systems. Deployed Anywhere. 5x ROI.

Customers automating their workflows

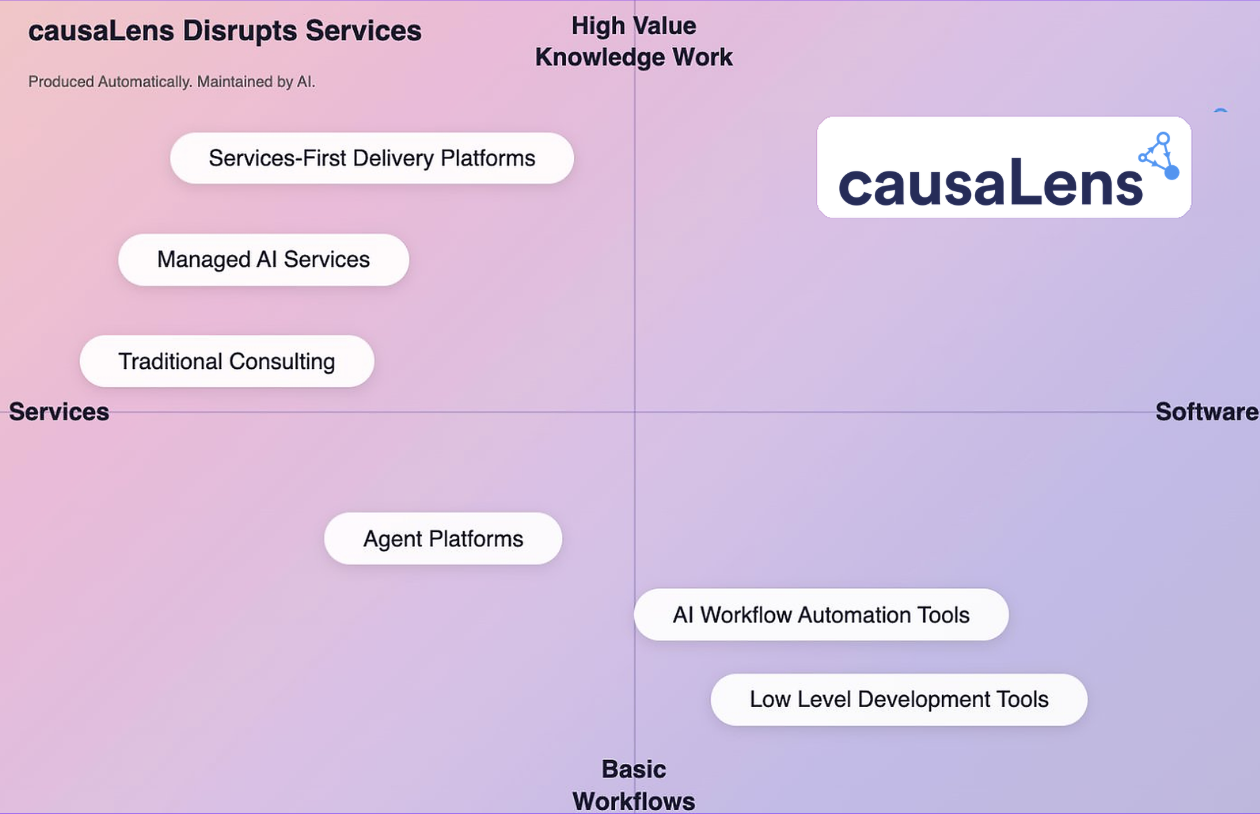

Reliably Automating Knowledge Work is Hard

- Talent Scarcity

People who can reliably build & deploy Digital Knowledge Workers (multi-agentic systems that automate knowledge work) are rare, expensive & constantly getting poached.

Building in-house is a multi-year bet most enterprises can't afford.

- Consultancy Misalignment

Consultancies talk AI but struggle to cannibalize their own billable-hours model.

And most lack the technical depth to build production-grade agent systems anyway.

- Tech-Solution Gap

No shortage of "shallow" agentic AI tech and fancy demos.

Massive shortage of reliable multi-agentic automations actually running in the enterprise.

causaLens Automates High-Value Knowledge Work. Reliably. Quickly. Scalably.

How It Works

Digital Knowledge Workers are multi-agentic systems that automate repetitive workflows & processes.

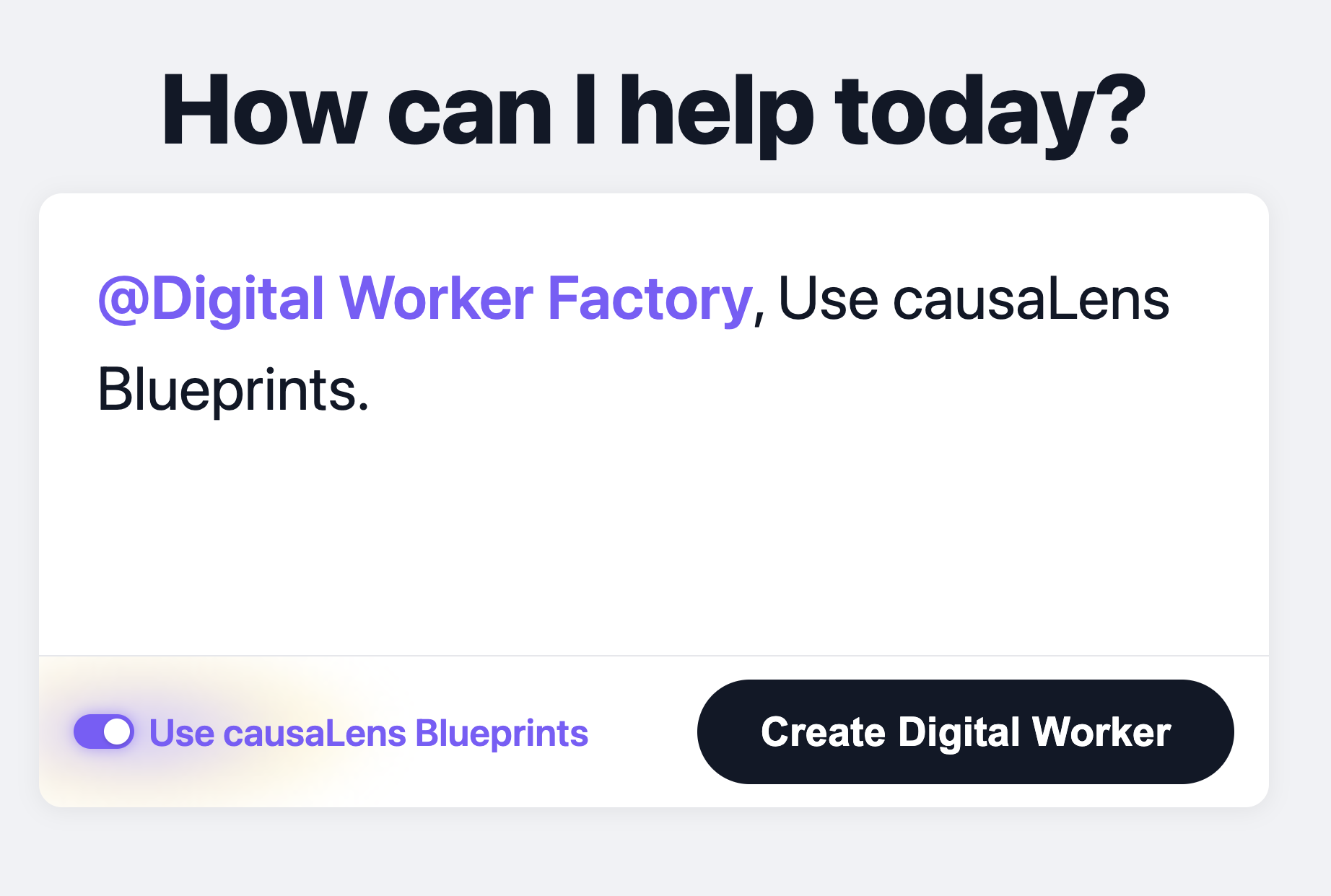

Blueprints

The fastest path from idea to live Digital Workers.

- Pre-built: Each blueprint is a pre-built Digital Worker with worked-out examples, pre-configured integrations, and proven playbooks for specific enterprise processes.

- No blank canvas: 80% ready out of the box; the remaining 20% is tuned to your needs.

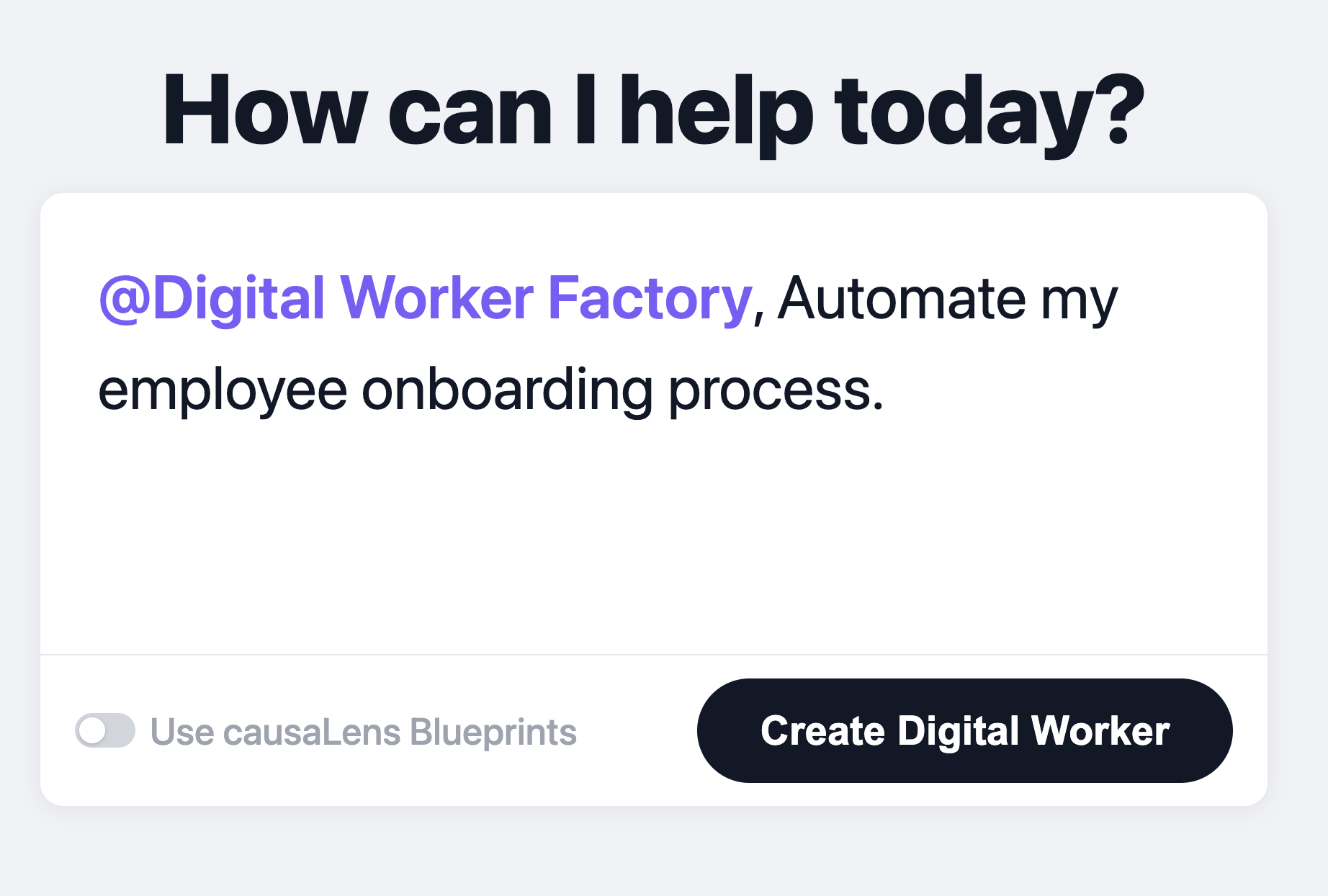

Factory

Your platform (production line) for building Digital Workers.

- Assembly: Combines your spec/requirements with our blueprints to rapidly assemble your multi-agentic workflow.

- Deployment-ready: Each Worker is delivered as a Docker image that can be deployed anywhere.

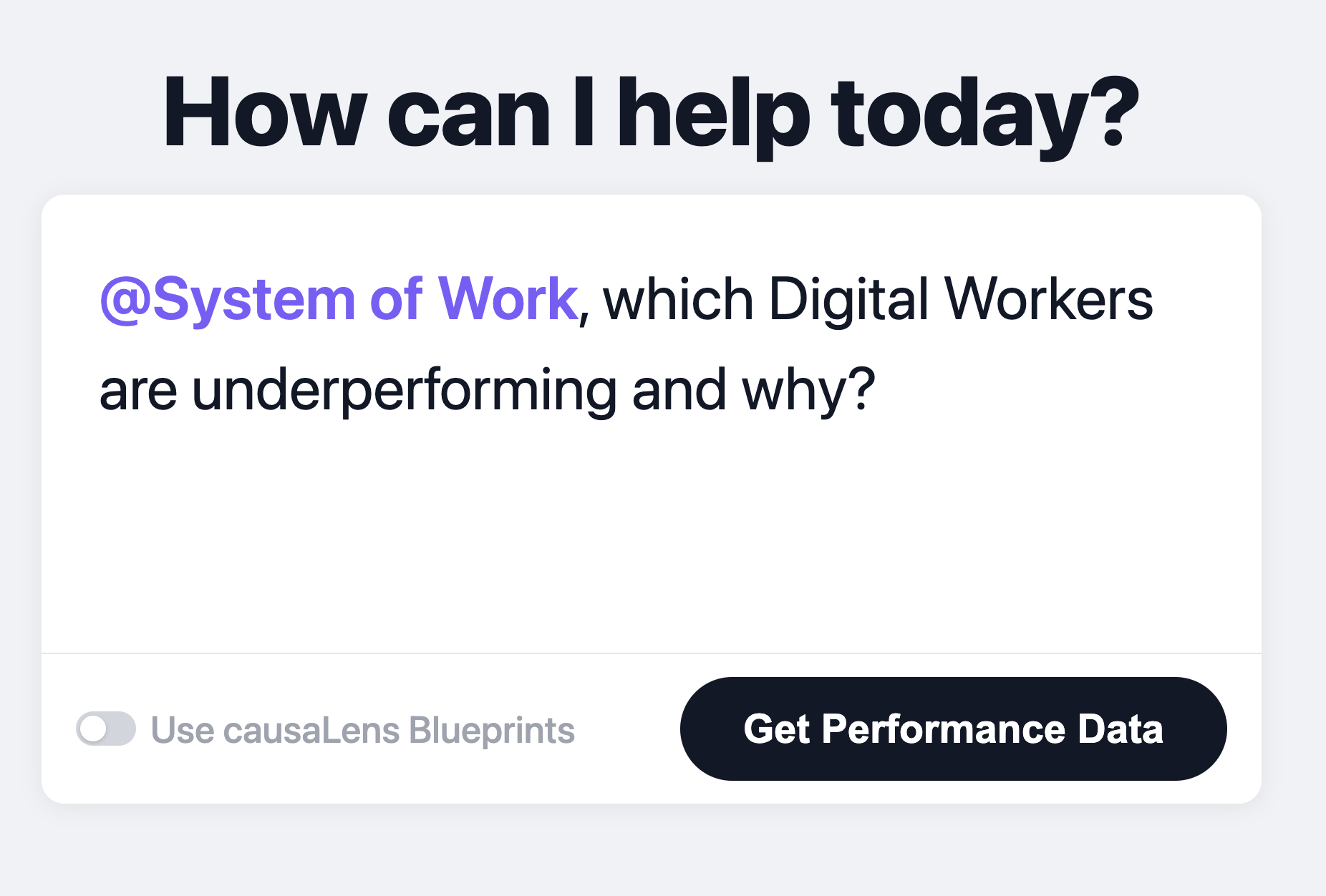

The System of Work

The "HR platform" of your Digital Knowledge Workers.

- Governance & compliance: Every Worker operates within your guardrails, with full audit trails and automated evaluation.

- Always-on monitoring: Real-time alerts when human input is needed or when performance drifts.

causaLens Reliability.

The difference between fancy AI demos & real ROI.

We have pioneered dozens of reliability features, including:

- Causal Reasoning

Most AI agents reason via correlations. Our Digital Workers reason via causation: they build causal models on your data to surface why things happen and suggest interventions.

- Humans-in-the-Loop & Monitoring Systems

Automatically add human-in-the-loop controls & approval stages.

Monitor your Digital Workers' performance & actions in real time.

Humans are always in control.

- Sophisticated Scoring and Judging

Digital Workers combine advanced quantitative and qualitative scoring, using Python for deterministic validation and LLM-based judgment, making them far more sophisticated than simple agentic systems.

- Self-Healing & Continuous Improvement

Digital Workers learn the way humans do: they watch how experts handle exceptions and navigate tradeoffs, and they get better with every decision.

Real results: How our clients thrive with causaLens.

Partners we integrate with: